Bag Lady plus mutt

Part of Paul Day’s bronze frieze under his Meeting Place sculpture at the Eurostar station in St Pancras. Note the way the dog’s head has been fondly polished by passers-by.

Quote of the Day

“Never mistake activity for achievement.”

- John Wooden (famous basketball coach)

Applies to much of the ‘AI-slop’ currently being generated.

Musical alternative to the morning’s radio news

Tom Waits | Picture In A Frame

Long Read of the Day

How To Read A Novel: Or why fiction gives us an antidote to narrowband thinking.

Really thoughtful long essay by Steven Johnson. It was triggered by a question posed by Patrick Collison, the only known example of a civilised billionaire: why should we read classic novels?

I sketched out an answer to this question in my book Farsighted, arguing that novels (and fictional narratives in general) were extensions of the human mind’s marvelous aptitude for building simulations of potential events. It’s something we do so effortlessly that we rarely stop to think about how nuanced a skill it really is: creatively projecting forward into our possible futures based on our previous experience of the world. Narratives of all sorts allow you to parachute into other simulated experiences, which ultimately give you more data for your own simulations. But novels, I would argue, give you the richest simulation of the interior life of other people’s experiences: you get a ringside view of all that emotional and cognitive action. This is particularly true of the novel after, say, 1750 or so, when the novelists began adopting more of the inner monologues (both first-person and “close-third” perspective) that Shakespeare had explored on the stage.

It seems fairly obvious to me that there is practical utility in running these simulations. We accumulate wisdom that we can apply to our own lives by watching other people live theirs. Historical nonfiction—particularly if the author has access to the subject’s inner life through journals or correspondence—arguably has even more utility, in that the events in question actually happened in the real world, and not in the imagination of the novelist. (This is one reason I decided to write nonfiction instead of novels—the other being that I don’t think I’m a good enough writer to write novels.)

I’ve admired Johnson for ages, not just because he’s a marvellous non-fiction writer, but more recently because he played a lead role in the Google team that created NotebookLM — which IMO is the most useful and imaginative AI that we have (and one that I use all the time). So I think this essay is worth your time.

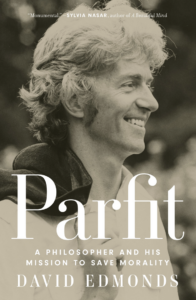

Books, etc.


Here’s the blurb:

The hardest choices are also the most consequential. So why do we know so little about how to get them right?

Big, life-altering decisions matter so much more than the decisions we make every day, and they’re also the most difficult: where to live, whom to marry, what to believe, whether to start a company, how to end a war. There’s no one-size-fits-all approach for addressing these kinds of conundrums.

Steven Johnson’s classic Where Good Ideas Come From inspired creative people all over the world with new ways of thinking about innovation. In Farsighted, he uncovers powerful tools for honing the important skill of complex decision-making. While you can’t model a once-in-a-lifetime choice, you can model the deliberative tactics of expert decision-makers. These experts aren’t just the master strategists running major companies or negotiating high-level diplomacy. They’re the novelists who draw out the complexity of their characters’ inner lives, the city officials who secure long-term water supplies, and the scientists who reckon with future challenges most of us haven’t even imagined. The smartest decision-makers don’t go with their guts. Their success relies on having a future-oriented approach and the ability to consider all their options in a creative, productive way.

My commonplace booklet

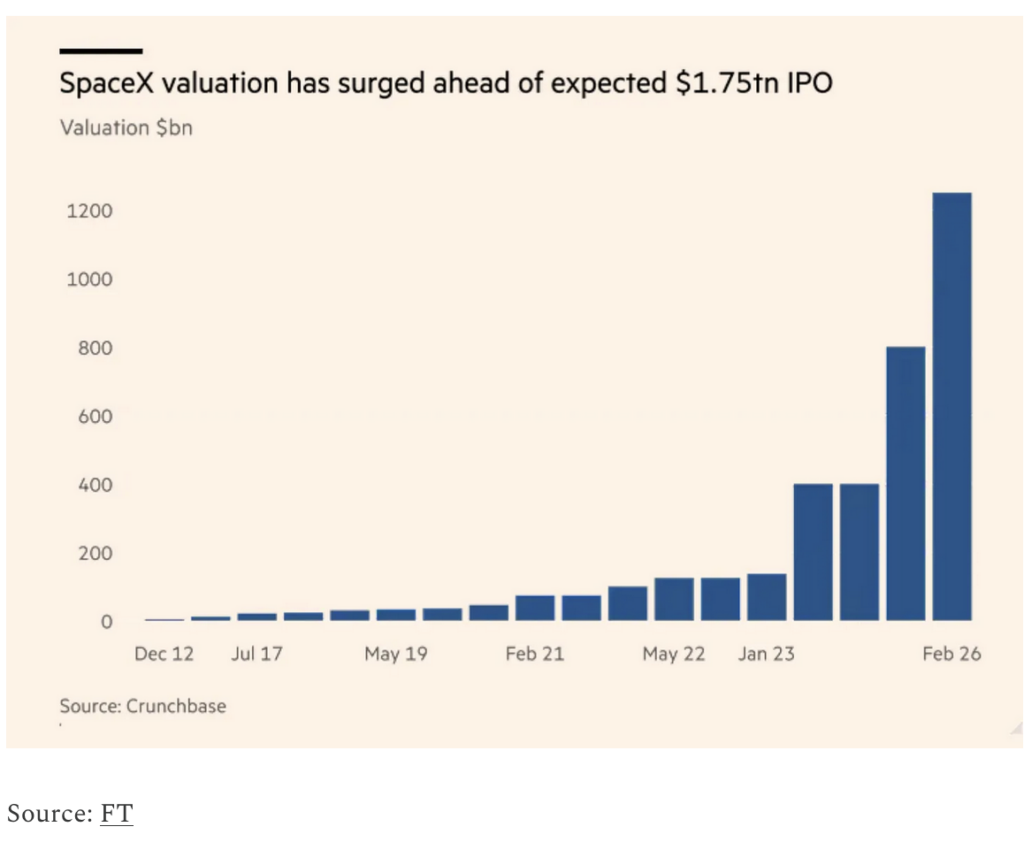

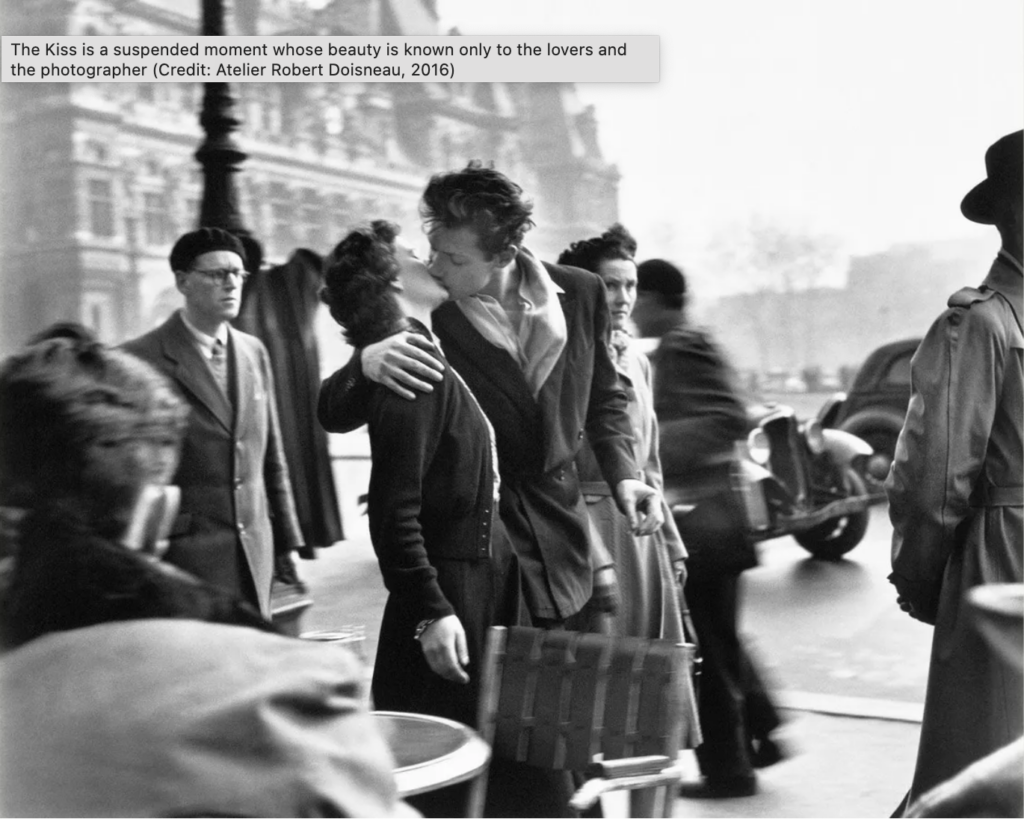

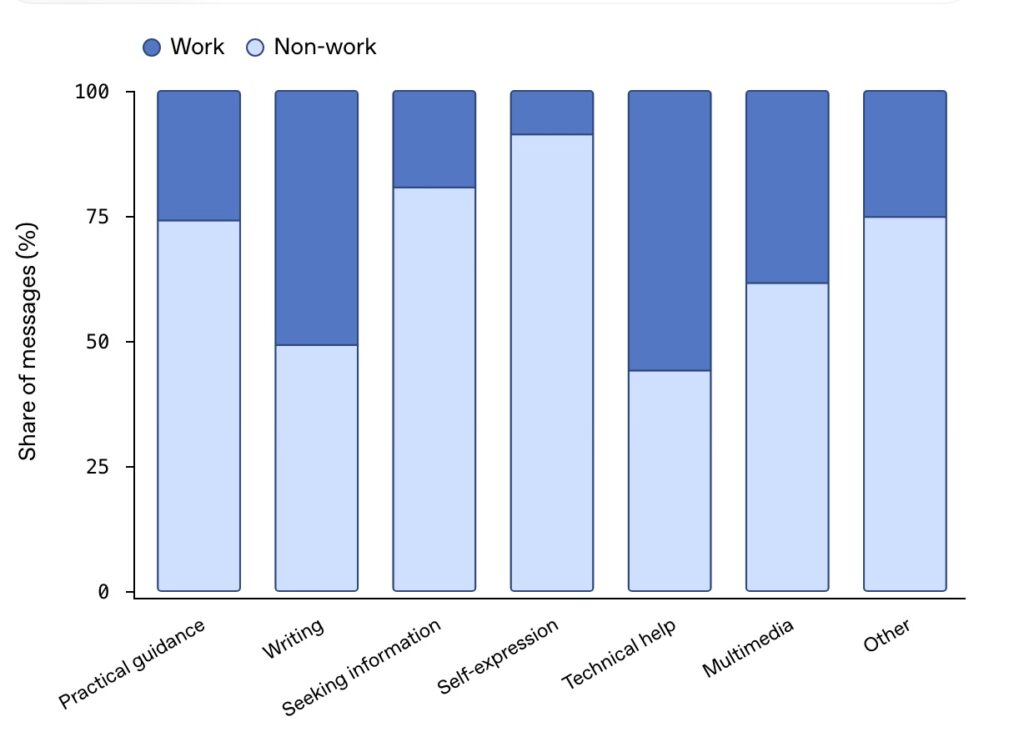

What Silicon Valley thinks of the rest of humanity

Most people I know in the A.I. industry think the median person is screwed, and they have no idea what to do about it. I live in San Francisco, among the young researchers earning million-dollar salaries and the start-up founders competing to build the next unicorn. While Silicon Valley has long warned about the risk of rogue A.I., it has recently woken up to a more mundane nightmare: one in which many ordinary people lose their economic leverage as their jobs are automated away.

Most economists and A.I. experts do not expect [the most extreme] scenario, but the persistence of the permanent underclass idea should concern all of us. First, because it signals how much collateral damage the A.I. companies will tolerate en route to A.G.I. And second, because the production of a social underclass is a policy choice. Instead of waiting for impact, we need to think seriously — now — about how we plan to support workers through A.I. disruption.

- Jasmine Sun

This Blog is also available as an email three days a week. If you think that might suit you better, why not subscribe? One email on Mondays, Wednesdays and Fridays delivered to your inbox at 5am UK time. It’s free, and you can always unsubscribe if you conclude your inbox is full enough already!