Shaggy dog?

Ireland, December, 2025. Think of it as my homage to Martin Parr, the great celebrant of ordinary life, who died this year.

Quote of the Day

“One afternoon, when I was four years old, my father came home, and he found me in the living room in front of a roaring fire, which made him very angry. Because we didn’t have a fireplace.”

- Victor Borge

Musical alternative to the morning’s radio news

The Beatles | Lady Madonna

One of my favourite tracks.

Long Read of the Day

A Positive Sign for Flying in the Future…

Remarkable story from James Fallows, a great American journalist and an ultra-knowledgeable aviation geek.

Here’s how it opens:

One week ago, something happened for the first time in the century-plus history of civilian air travel. An airplane whose systems detected a problem with its human pilot found its own way toward a suitable airport not in its original flight plan…

Read on. In a way it puts concerns about self-driving cars in perspective.

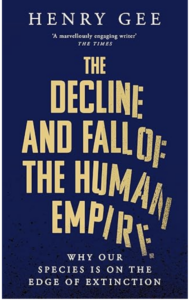

Books, etc.

If you’re puzzled why a book published nearly half a century ago is suddenly flying off booksellers’ shelves, then join the club. But John Self’s thoughtful piece about it in the Observer made me think that maybe I should have a look.

The story of So Long is simple on the surface. An unnamed narrator in old age – ostensibly Maxwell himself – is looking back to his childhood in rural Illinois in the 1920s. He is traumatised by the death of his mother in the 1918 flu epidemic and by the loss of a friendship following a terrible crime.

The immediate appeal of the book is in its deep hooks: there is a murder (a pistol shot rings out on the first page), a love triangle, the impenetrable mysteries of the human heart. But the novel is also about shame and atonement and … about “what it means to have lived an adequate life”…

My commonplace booklet

On deathbed advice/regret

Interesting blog post

A common social media trope is posting advice from people on their deathbed. Usually about things they didn’t do. “I should’ve been more there for my loved ones” is a classic tune, “I should’ve cared less about what other people think” is another hit, usually culminating in the banger conclusion of “I should’ve done [a super specific personal thing like opening up a hobbyist store or buying a house in their favorite hinterland].”

I don’t value this kind of advice much, it’s too cheap. Just like complaining is just so cheap…

Yep. Although I liked the undertaker who once said that he had never heard a dying person say that they wished they’d bought more stuff.

Feedback

The O Sole Mio story continues…

Chronis Tzedakis writes:

On the matter of the Venice gondoliers’ choice of tunes, I too remember the ‘Just One Cornetto’ advert, though not with too much affection. Apparently purists shudder at the sound of ‘O Sole Mio’ in Venice, since it is a Neapolitan song. According to anecdotal evidence, an American tourist paid a gondolier to sing it and they have obliged ever since.

This Blog is also available as an email three days a week. If you think that might suit you better, why not subscribe? One email on Mondays, Wednesdays and Fridays delivered to your inbox at 5am UK time. It’s free, and you can always unsubscribe if you conclude your inbox is full enough already!